How to Replace Sora with Seedance 2.0: Which One Costs Less in 2026?

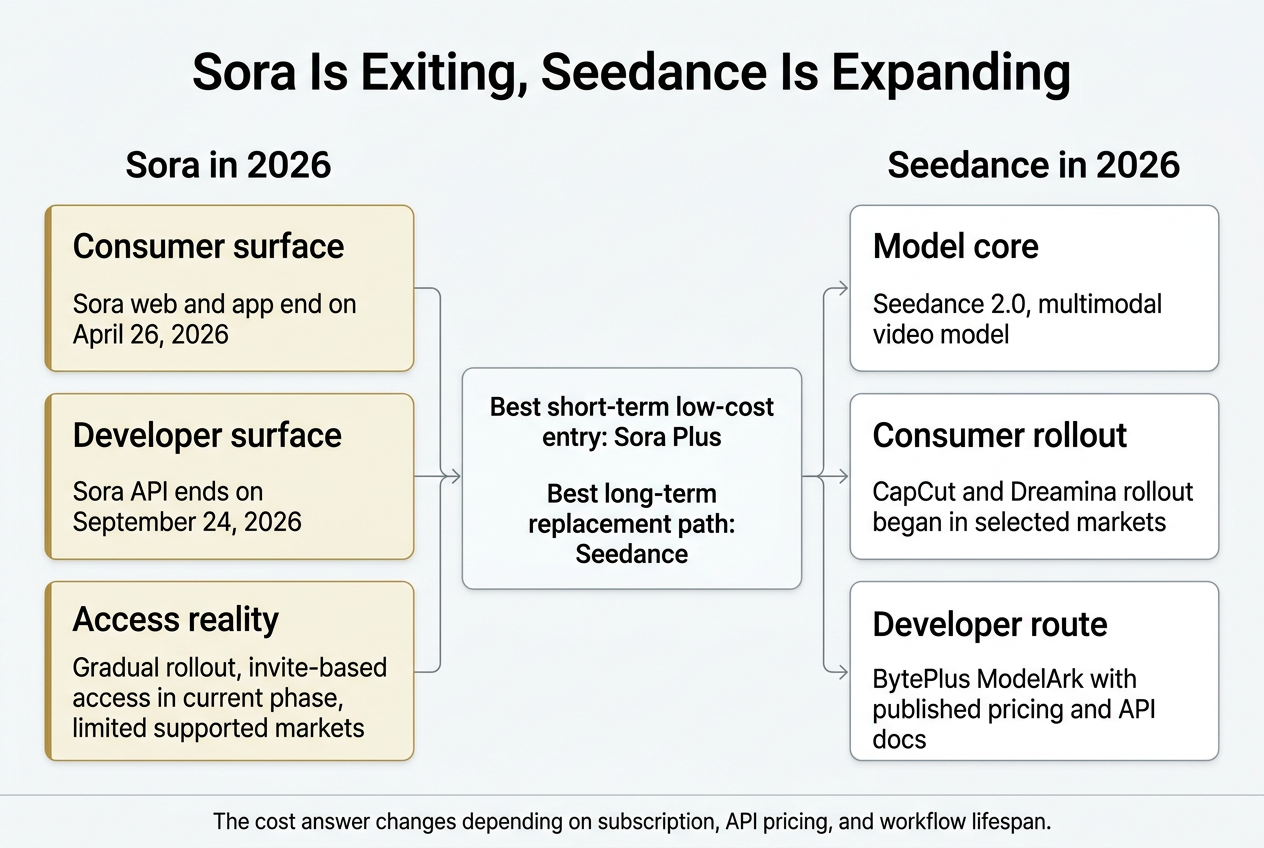

If you mean official access in mid-April 2026, the clean answer is this: Seedance is the more realistic long-term replacement, but it is not automatically the cheaper option. Sora’s web and app experiences are scheduled to end on April 26, 2026, and the Sora API is scheduled to end on September 24, 2026. Seedance, by contrast, is still expanding across ByteDance surfaces and BytePlus API access. But “which one costs less” changes depending on whether you mean a consumer subscription, API generation cost, or the total cost of keeping a workflow alive after Sora’s sunset. (OpenAI Help Center)

The biggest mistake in most Sora versus Seedance writeups is that they compare the wrong layers. OpenAI’s Sora can mean a ChatGPT-linked subscription surface, the Sora app, or the API. Seedance can mean ByteDance’s model itself, a phased rollout in products like CapCut and Dreamina, or BytePlus ModelArk for developers. Those are not billed the same way, they are not available in the same places, and they are not equally stable going forward. (ByteDance Seed)

A second problem is that even official documentation is in motion. OpenAI’s Help Center still has one Sora article saying there is no API access, while OpenAI’s developer docs list sora-2 and sora-2-pro as API models and publish a formal shutdown date for them. OpenAI’s Sora Billing FAQ also describes Pro as supporting up to 20-second videos, while OpenAI’s Sora release notes say all users can generate 15-second clips and Pro users can generate 25-second storyboard videos on web. If you are budgeting or migrating, the only safe approach is to read the product-surface-specific documentation for the exact surface you plan to use and treat older overview pages carefully. (OpenAI Help Center)

The practical answer in one paragraph

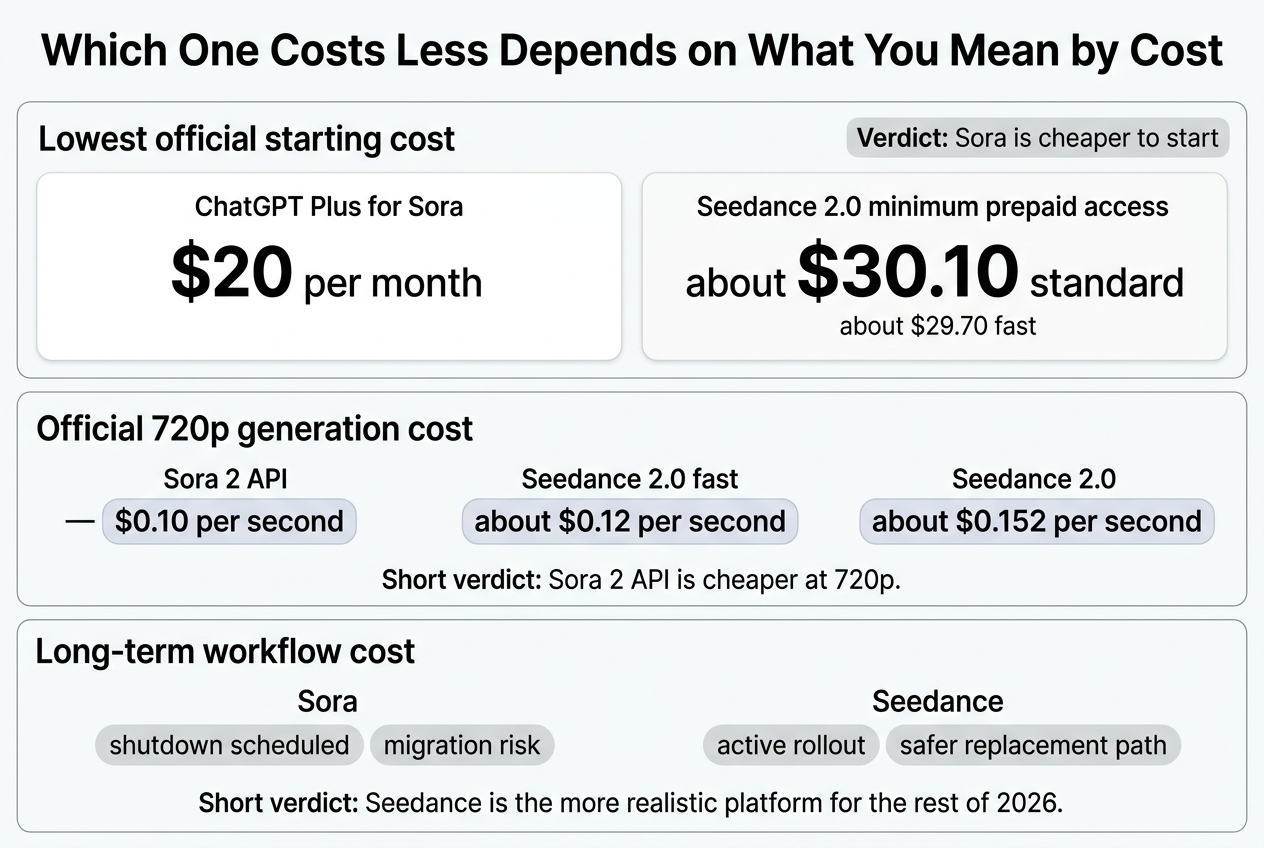

For the lowest official upfront cost, Sora is still cheaper because ChatGPT Plus costs $20 per month, while BytePlus’s official Seedance 2.0 resource-pack structure effectively starts around $30.10 for standard Seedance 2.0 or $29.70 for Seedance 2.0 fast when you use the smallest purchasable pack combination. For 720p API generation cost, Sora 2 is also cheaper on paper at $0.10 per second, compared with BytePlus’s official 5-second 720p examples of about $0.76 for Seedance 2.0 and $0.60 for Seedance 2.0 fast, which work out to roughly $0.152 and $0.12 per second. But for long-term workflow cost in the rest of 2026, Seedance is the more realistic place to rebuild because OpenAI has already published Sora’s shutdown timeline and has not named a replacement on the Sora deprecation page. (OpenAI Help Center)

That is why people keep talking past each other. One person is comparing Sora Plus versus Seedance API minimum spend. Another is comparing Sora API per-second cost versus Seedance 2.0 fast. A third is asking a business question: “Which platform should I still be building on after September?” Those three questions produce three different answers. (OpenAI Help Center)

What Sora means in April 2026

OpenAI now describes Sora 2 as available on the Sora iOS app, the Sora 2 Android app, and on sora.com, but the company also says it is “slowly enabling access” and notes that the current phase may require an invite code. The same help page says Sora 2 Pro is available to ChatGPT Pro users on the web, with app rollout to follow. In other words, Sora is not simply “open to everyone everywhere” even before its published shutdown dates arrive. (OpenAI Help Center)

OpenAI’s support page for Sora 2 web and mobile currently lists a relatively short set of supported markets, including the United States, Canada, Japan, Korea, Mexico, Taiwan, Thailand, Vietnam, and several other Latin American countries. That is a meaningful operational constraint for teams that want a globally uniform workflow. (OpenAI Help Center)

The ChatGPT-linked Sora experience is also tiered. OpenAI’s Sora Billing FAQ says Plus and Business users get unlimited images and video up to 480p and 10 seconds, with one concurrent generation, while Pro users get faster generations, up to 1080p and 20 seconds, up to five concurrent generations, and watermark-free downloads. That sounds generous if you are already paying for ChatGPT, but it is still a subscription surface, not a durable standalone production lane for the remainder of 2026. (OpenAI Help Center)

OpenAI’s product and safety boundaries matter as well. The Sora app help page says it is best suited to short clips, with 10-second vertical video as the default creation mode, and says image-to-video with depictions of real people is not supported at the moment. The release notes also say known public figures remain prohibited, and that some realistic-person image-to-video generations are automatically stylized for clarity. That means some workflows people informally treated as “video editing” in Sora were never as open-ended as the hype suggested. (OpenAI Help Center)

For developers, the story is even more fragile. OpenAI’s API docs list sora-2 and sora-2-pro as deprecated models, and OpenAI’s deprecations page shows a September 24, 2026 shutdown date for both the Sora 2 video models and the Videos API, with no recommended replacement listed. That is the kind of line item procurement teams and builders should treat as a migration trigger, not a footnote. (OpenAI Developers)

Reuters added useful context in late March, reporting that OpenAI was pulling back from the Sora app while focusing resources elsewhere, with Sora’s compute footprint being one of the pressures inside the company. Even if you do not rely on unnamed-source reporting for product planning, the article helps explain why Sora no longer looks like a safe destination for a new video stack. (Reuters)

What Seedance means in April 2026

Seedance is easier to misunderstand because people often talk about it as though it were a single global app. The official ByteDance Seed materials describe Seedance 2.0 as a multimodal audio-video generation model released on February 12, 2026. The launch post says it supports four input modalities — text, image, audio, and video — and allows users to input up to 9 images, 3 video clips, and 3 audio clips at the same time. It also describes 15-second high-quality multi-shot audio-video output. That is not a small feature delta. It changes the kind of jobs the model is naturally good at. (ByteDance Seed)

ByteDance’s model page reinforces the same positioning. Seedance 2.0 is described there as supporting text, image, audio, and video inputs, with heavy emphasis on reference-based control over performance, lighting, shadow, and camera movement. For anyone whose Sora usage drifted toward character continuity, shot control, source-material-driven edits, or voice-guided video work, Seedance is closer to a structural replacement than a plain text-to-video tool would be. (ByteDance Seed)

At the same time, Seedance is not one uniform public consumer surface. TechCrunch reported on March 26 that Dreamina Seedance 2.0 was rolling out in CapCut first to users in Brazil, Indonesia, Malaysia, Mexico, the Philippines, Thailand, and Vietnam, with more markets to come. That is important because “Seedance is available” can be true in one product surface and false in another, or true in the API but not yet broadly available in a local consumer app. (TechCrunch)

For developers and teams, the more durable route is BytePlus ModelArk. BytePlus’s documentation says all listed models are currently supported in the ap-southeast-1 region, while some non-video models also appear in eu-west-1. Its international availability page lists a very broad set of supported countries and regions for BytePlus Model Service, while warning that actual versions may differ by geography. That is a very different shape from the Sora app’s smaller supported-market list. (BytePlus)

The other key difference is commercial structure. Seedance’s official developer-facing price story is not a simple monthly subscription. It is token-based, with different pricing depending on whether the input contains video, plus prepaid resource-pack options that expire and do not support refunds. That is more complex than ChatGPT Plus, but it is also more predictable for production planning if you actually know your shot counts and output specs. (BytePlus)

The cost question only makes sense after you separate entry cost from generation cost

Before looking at the numbers, it helps to define three separate questions.

The first question is how much cash you must commit before you can start. This is what matters to students, solo creators, or teams just testing a concept.

The second question is how much one clip actually costs. This is what matters to developers, agencies, and teams running repeated generations.

The third question is what it costs to keep the workflow alive for the rest of the year. This includes not just money but vendor stability, access friction, and whether you are migrating onto a surface that is being wound down.

Most side-by-side comparisons collapse all three into a single number. That is why they are often misleading.

A clean table of what you are actually buying

| Product surface | What it is in practice | Current status in April 2026 | How you pay |
|---|---|---|---|
| Sora through ChatGPT and Sora app | Consumer-facing creation surface tied to OpenAI accounts and plan tiers | Web and app scheduled to end April 26, 2026; access still being gradually enabled; supported only in listed markets | ChatGPT subscription |
| Sora API | OpenAI developer models | Deprecated; scheduled to end September 24, 2026 | Per-second API pricing |
| Seedance in CapCut and related ByteDance consumer products | Consumer rollout of Dreamina Seedance 2.0 | Phased rollout, beginning with selected markets | Product-specific, not one global public plan |
| Seedance through BytePlus ModelArk | Developer and production route to Seedance 2.0 and Seedance 2.0 fast | Active, with published pricing and resource packs | Token pricing, prepaid packs, or pay-as-you-go |

The Sora status, access, and shutdown dates come from OpenAI’s Help Center and API docs. The Seedance product description, rollout status, and developer-access structure come from ByteDance Seed, BytePlus ModelArk, and TechCrunch’s rollout reporting. (OpenAI Help Center)

Which one has the lower upfront cost

If the question is simply, “What is the cheapest official way to start experimenting today,” Sora still wins on the upfront number.

OpenAI’s ChatGPT Plus plan is $20 per month. On the Sora side, that gives users access to video generation within the plan limits OpenAI publishes for Plus. OpenAI’s Pro tiers now sit above that at $100 and $200, but the $20 Plus tier is the cheapest official Sora entry point if you can actually access the product surface before the shutdown window closes. (OpenAI Help Center)

BytePlus’s Seedance 2.0 resource-pack math starts higher. BytePlus documents a 1-million-token Seedance 2.0 pack at $4.3, but says at least 7 such packs must be purchased, which makes the smallest standard Seedance 2.0 prepaid block about $30.10. For Seedance 2.0 fast, the 1-million-token pack is $3.3, but at least 9 must be purchased, which puts the smallest fast-model prepaid block at about $29.70. (BytePlus)
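The pack arithmetic above is easy to reproduce. The following is a minimal sketch using only the pack prices and minimum quantities quoted in this section; the dictionary keys and the function name are illustrative labels, not official identifiers.

```python
from decimal import Decimal

# Pack prices and minimum quantities as quoted in the paragraph above.
# The dictionary keys are illustrative labels, not official model IDs.
RESOURCE_PACKS = {
    "seedance-2.0": {"usd_per_pack": Decimal("4.3"), "min_packs": 7},
    "seedance-2.0-fast": {"usd_per_pack": Decimal("3.3"), "min_packs": 9},
}
CHATGPT_PLUS_USD = Decimal("20")


def minimum_prepaid_block(model: str) -> Decimal:
    """Smallest amount you can prepay for a given model's resource packs."""
    pack = RESOURCE_PACKS[model]
    return pack["usd_per_pack"] * pack["min_packs"]


for model in RESOURCE_PACKS:
    block = minimum_prepaid_block(model)
    print(f"{model}: ${block:.2f} (${block - CHATGPT_PLUS_USD:.2f} above ChatGPT Plus)")
```

Decimal arithmetic is used here only to keep the dollar figures exact; the comparison against $20 is the same one the surrounding text makes.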

That means the answer to the lowest-entry-cost question is straightforward: Sora Plus is cheaper to start with than official Seedance 2.0 resource packs. That is true even before you get into clip math. It is also the part many “Seedance is cheaper” claims leave out. (OpenAI Help Center)

There is one catch. Lower entry cost is not the same as lower long-term cost. A $20 subscription tied to a service that is already scheduled for shutdown is not automatically the better economic choice for a team that has to keep producing in Q3 and Q4. That is why the next layer matters more than the sticker price alone. (OpenAI Help Center)

Which one is cheaper per clip

This is where many readers expect the headline to flip. On official 720p API pricing, it does not.

OpenAI’s current developer pricing page lists Sora 2 at $0.10 per second for 720p generation. It lists Sora 2 Pro at $0.30 per second for 720p, $0.50 per second for 1024p, and $0.70 per second for 1080p. If you only care about the official API lane, Sora 2 has a very simple price signal. (OpenAI Developers)

BytePlus’s official pricing docs are more layered. For Seedance 2.0, online inference pricing varies depending on whether your input contains video. For the standard model, BytePlus lists $7.0 per million tokens when no video is in the input and $4.3 per million tokens when video is included. For Seedance 2.0 fast, it lists $5.6 per million tokens with no video input and $3.3 per million tokens when video is included. BytePlus then publishes example clip prices. In its 5-second 720p, no-video-input example, Seedance 2.0 is about $0.76 per video and Seedance 2.0 fast is about $0.60 per video. (BytePlus)

That works out to about $0.152 per second for Seedance 2.0 and $0.12 per second for Seedance 2.0 fast in that example. Sora 2 API at 720p, by comparison, is $0.10 per second. So if you are comparing official API cost for 720p generation, Sora 2 API is cheaper than Seedance 2.0 fast, and clearly cheaper than standard Seedance 2.0. (OpenAI Developers)
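The per-second conversion is simple division, sketched below with the figures quoted in this section. The function name and the labels in the dictionary are illustrative; only the prices come from the published examples.

```python
def usd_per_second(clip_price_usd: float, clip_seconds: float) -> float:
    """Convert a published per-clip example price into a per-second rate."""
    return clip_price_usd / clip_seconds


# Sora 2 is already priced per second; the Seedance entries are derived
# from BytePlus's 5-second, 720p, no-video-input examples quoted above.
rates = {
    "Sora 2 API": 0.10,
    "Seedance 2.0 fast": usd_per_second(0.60, 5),
    "Seedance 2.0": usd_per_second(0.76, 5),
}

for name, rate in sorted(rates.items(), key=lambda item: item[1]):
    print(f"{name}: ${rate:.3f} per second")
```

The comparison only holds for the 720p, no-video-input case; other resolutions or input modes would need their own example prices.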

That point matters because a lot of people intuitively assume Seedance must be the cheaper engine because it is newer on the market and often discussed as a more accessible alternative. Official pricing does not support that as a universal claim. On a pure 720p API basis, it is not the cheaper one. (OpenAI Developers)

The price trap most people miss with Seedance

The most useful nuance in BytePlus’s pricing docs is not the list price. It is the input-mode distinction.

BytePlus explicitly says Seedance 2.0 pricing varies depending on whether the input contains video, and it publishes separate example ranges for video-input jobs. In the 5-second 720p example with video included, Seedance 2.0 costs about $0.84 to $1.86 per video, depending on input-video length. Note the apparent paradox: the listed per-token rate is lower when video is included, yet the per-clip total in BytePlus’s own examples is higher than the $0.76 no-video figure. The docs also note minimum token-consumption limits for the video-input mode. So if your migration from Sora involves remixing, continuation, or video-based reference workflows, your real Seedance cost may be materially higher than the headline number people quote from simple text-to-video examples. (BytePlus)
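To see what that range does to a batch budget, here is a rough sketch that scales the published 5-second 720p example prices by clip count. It assumes every clip in the batch matches those examples, which a real project will not; the function and constant names are made up for illustration.

```python
# Per-clip prices in cents for BytePlus's 5-second 720p examples quoted above.
NO_VIDEO_INPUT_CENTS = 76
VIDEO_INPUT_RANGE_CENTS = (84, 186)  # varies with input-video length


def budget_range_usd(clips: int, video_input: bool) -> tuple[float, float]:
    """Return (low, high) spend in dollars for a batch of 5-second clips."""
    if video_input:
        low, high = VIDEO_INPUT_RANGE_CENTS
    else:
        low = high = NO_VIDEO_INPUT_CENTS
    return clips * low / 100, clips * high / 100


print(budget_range_usd(50, video_input=False))  # (38.0, 38.0)
print(budget_range_usd(50, video_input=True))   # (42.0, 93.0)
```

For the same 50 clips, the video-input batch could cost anywhere from slightly more to well over double the no-video batch, which is why a range is a safer planning figure than a single number.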

This is where surface-level blog posts become actively unhelpful. A creator reading “Seedance is cheaper than Sora” may assume that all Seedance jobs are cheaper. But the moment you start using the features that make Seedance a better practical replacement — multimodal references, continuation, video-conditioned generation — you may move into a more expensive pricing path. The model can be more useful and still not be cheaper per clip. Those are separate questions. (ByteDance Seed)

BytePlus’s prepaid model adds a second trap: the packs expire after 90 days, they are non-refundable, and if your packs are exhausted or expired, usage can automatically switch to pay-as-you-go. That is not a deal-breaker, but it means Seedance budgeting has to be done like production budgeting, not like a casual creator subscription. (BytePlus)
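One way to reason about the expiry risk is to compute what you effectively pay per million tokens you actually consume before the packs lapse. The sketch below assumes 1-million-token packs, as in the packs described earlier, and treats unused tokens as a sunk cost; the function name and parameters are hypothetical.

```python
from decimal import Decimal


def effective_usd_per_million(usd_per_pack: Decimal, packs_bought: int,
                              millions_used_before_expiry: Decimal) -> Decimal:
    """Price actually paid per million tokens consumed before the packs expire.

    Assumes each pack holds 1 million tokens and unused tokens are forfeited.
    """
    usable = min(millions_used_before_expiry, Decimal(packs_bought))
    if usable <= 0:
        raise ValueError("no tokens used: the whole prepaid amount is lost")
    return usd_per_pack * packs_bought / usable


# Minimum Seedance 2.0 purchase: 7 packs at $4.3 each.
print(effective_usd_per_million(Decimal("4.3"), 7, Decimal("7")))  # 4.3
print(effective_usd_per_million(Decimal("4.3"), 7, Decimal("4")))  # 7.525
```

Using only 4 of the 7 million prepaid tokens raises the effective rate from $4.30 to about $7.53 per million, so the pack discount only materializes if usage is planned against the 90-day window.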

A practical price table that is actually useful

| Comparison point | Official number | What it means in practice |
|---|---|---|
| ChatGPT Plus | $20 per month | Cheapest official Sora entry point if you can still access the relevant Sora surface |
| ChatGPT Pro tiers | $100 and $200 | Higher Sora-related access tiers with more headroom, but not the cheapest route |
| Sora 2 API 720p | $0.10 per second | Lowest official per-second number in this comparison set |
| Sora 2 Pro API 720p | $0.30 per second | Higher-fidelity Sora lane, but much more expensive than base Sora 2 |
| Seedance 2.0 5-second 720p example, no video input | $0.76 per clip | About $0.152 per second |
| Seedance 2.0 fast 5-second 720p example, no video input | $0.60 per clip | About $0.12 per second |
| Seedance 2.0 minimum prepaid block | About $30.10 | Higher starting cost than ChatGPT Plus |
| Seedance 2.0 fast minimum prepaid block | About $29.70 | Still higher starting cost than ChatGPT Plus |

The OpenAI numbers come from official plan and API pricing documents. The Seedance numbers come from BytePlus’s official ModelArk pricing and resource-pack documentation. Arithmetic conversions are calculated directly from those published values. (OpenAI Help Center)

One simple way to think about this is with rough break-even intuition. A ChatGPT Plus month costs $20. At official 720p API rates, that is roughly the cost of 40 five-second Sora 2 API clips, about 33 five-second Seedance 2.0 fast clips, or about 26 five-second standard Seedance 2.0 clips in BytePlus’s no-video-input example. Those are not apples-to-apples purchasing models, but they help explain why some users feel Sora is cheaper while others feel Seedance is the better operating platform. (OpenAI Help Center)
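The clip counts in that comparison come from integer division, sketched here in cents to avoid floating-point rounding. The $20 figure is used purely as a yardstick; it does not buy API credits, and the names below are illustrative.

```python
BUDGET_CENTS = 2000  # one ChatGPT Plus month, used only as a yardstick

# Price of one 5-second 720p clip in cents, from the figures above.
CLIP_PRICE_CENTS = {
    "Sora 2 API": 10 * 5,     # $0.10 per second for 5 seconds
    "Seedance 2.0 fast": 60,  # BytePlus no-video-input example
    "Seedance 2.0": 76,       # BytePlus no-video-input example
}


def whole_clips(budget_cents: int, clip_price_cents: int) -> int:
    """Number of complete clips a budget covers, rounding down."""
    return budget_cents // clip_price_cents


for name, price in CLIP_PRICE_CENTS.items():
    print(f"{name}: {whole_clips(BUDGET_CENTS, price)} clips")
```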

Which one costs less over the rest of 2026

If you are a solo creator making a handful of short clips in the next week, the cheapest path may still be Sora Plus if you already have access. If you are a developer who only cares about 720p API cost until late September, Sora 2 API is cheaper on paper. But if you are rebuilding a workflow that needs to survive the rest of 2026, the answer changes: Seedance is the lower-risk cost structure because it is not the platform with a published shutdown date. (OpenAI Help Center)
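The timing side of that decision can be checked with a few lines of date arithmetic. This sketch uses the shutdown dates cited in this article and conservatively treats a workflow that still needs to render on the shutdown date itself as affected; the function name and the sample project dates are hypothetical.

```python
from datetime import date

# Shutdown dates as cited in this article.
SORA_WEB_APP_END = date(2026, 4, 26)
SORA_API_END = date(2026, 9, 24)


def needs_migration(last_render_date: date, uses_api: bool) -> bool:
    """True if the workflow must still render on or after the relevant shutdown date."""
    cutoff = SORA_API_END if uses_api else SORA_WEB_APP_END
    return last_render_date >= cutoff


as_of = date(2026, 4, 16)
print((SORA_WEB_APP_END - as_of).days)  # 10
print((SORA_API_END - as_of).days)      # 161
print(needs_migration(date(2026, 8, 31), uses_api=True))   # False
print(needs_migration(date(2026, 12, 15), uses_api=True))  # True
```

A prototype that finishes in August fits inside the API window; anything scheduled to render in Q4 does not, regardless of the per-second price.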

That matters more than many buyers admit. Migration cost is not just what you pay the vendor. It is also what you pay in re-testing prompts, re-exporting assets, re-training collaborators, reworking integrations, and re-explaining the stack to clients or stakeholders. OpenAI’s deprecation page is unusually stark here because it lists no recommended replacement for Sora 2 or the Videos API. That is not the posture of a product you should be newly standardizing on. (OpenAI Developers)

By contrast, ByteDance and BytePlus are still broadening the Seedance footprint. ByteDance is integrating Dreamina Seedance 2.0 into CapCut and related surfaces, and BytePlus is documenting the model, its pricing, and its API behavior in enough detail for real implementation. That does not make Seedance perfect or universally cheaper, but it does make it a more actionable migration target. (TechCrunch)

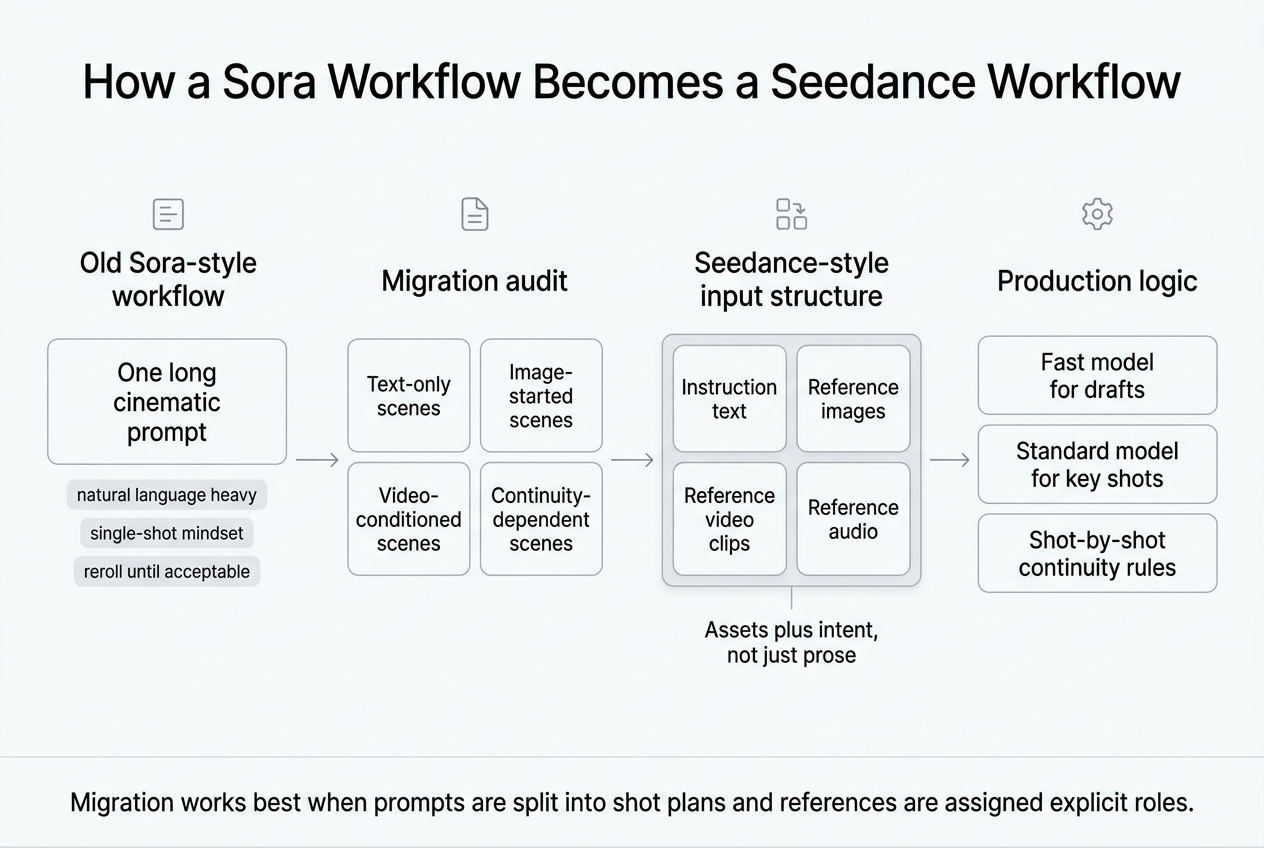

Seedance is a better replacement only if your workflow actually needs what it is good at

Seedance is not “better than Sora” in some totalizing sense. The useful question is whether it is a better replacement for the way you actually work.

If your old Sora usage was basically this — type a scene, generate a short clip, retry a few times, download the best result — then what you probably valued most was simplicity. In that narrow use case, Sora’s subscription-style entry point was easier to understand. You did not need to think about token packs, input-mode pricing, or reference-material strategy. (OpenAI Help Center)

If your Sora usage had already grown into something more production-oriented — keeping a character stable across shots, steering camera movement, syncing against audio, or using multiple references to direct a scene — then Seedance is much closer to the tool you were looking for. ByteDance’s official launch materials put multimodal references at the center of the product, not on the margin, and the model’s published interface accepts text, images, audio, and video as first-class inputs. That changes the creative grammar of the tool. (ByteDance Seed)

This is also why “replace Sora with Seedance” is not always the right mental model. In many cases, the real transition is from a single-prompt video toy to a reference-driven short-form production workflow. Seedance happens to be a better fit for the second category. Users who never wanted that extra control may find the move annoying rather than empowering. (ByteDance Seed)

The strongest feature difference is not quality hype, it is input structure

A lot of comparison pages get trapped in vague language about realism, cinematic output, or “physics.” That is not where the most useful distinction is.

The most operationally important difference is that Seedance 2.0 officially supports four input modalities and a large number of references in one generation flow. ByteDance says users can combine up to 9 images, 3 video clips, and 3 audio clips with natural-language instructions. OpenAI’s Sora 2 API docs, by contrast, describe Sora 2 and Sora 2 Pro as taking text and image inputs and returning video plus audio. The Sora app allows users to start from text and can also accept uploaded assets in the app flow, but the developer-side API story is narrower than Seedance’s multimodal structure. (ByteDance Seed)

That means Seedance is naturally stronger for jobs like these: keeping the same person or object identity across multiple shots, steering the scene with reference clips, syncing a generated sequence against supplied audio, and using source material as more than a loose stylistic hint. None of those advantages guarantee a lower bill, but they do make the model a more realistic migration target for users leaving Sora because they needed more control. (ByteDance Seed)

Neither one should be treated as a one-click long-form video engine

There is another practical limit worth stating plainly: both Sora and Seedance are better understood as short-clip systems, not one-click replacements for long-form editing suites.

OpenAI’s current Sora help and billing docs focus on 10-second defaults, up to 20 seconds in the editor, and 15- or 25-second options depending on surface and plan. ByteDance’s Seedance 2.0 launch materials talk about 15-second multi-shot output, and BytePlus’s Seedance 2.0 tutorial lists output durations in the 4-to-15-second range depending on model and setup. If your mental model is “I used Sora to make a minute-long cinematic scene and I want Seedance to do the same in one pass,” you are framing the problem the wrong way. Both tools work better when you think in shots, not finished films. (OpenAI Help Center)

That is why the migration question should be less about raw duration and more about shot planning. A short-form, multi-shot workflow is where Seedance’s reference system becomes genuinely useful.

How to migrate a Sora workflow to Seedance without wasting money

The most efficient migration starts with an audit, not a prompt rewrite.

First, separate your old Sora jobs into four buckets: simple text-to-video scenes, image-started scenes, remix or continuation jobs, and jobs that depended on character or audio consistency. If most of your old library lives in the first bucket, you are moving from a simpler prompting culture into a more structured one. If most of it lives in the last three buckets, you are probably moving toward a tool that fits your needs better even if the interface and billing logic feel heavier at first. The goal is not to port prompts word for word. The goal is to port the real control logic behind them.

Second, stop thinking in single prompts and start thinking in assets plus intent. Seedance’s strength is not that it hears adjectives better. Its strength is that it can use multiple references with explicit directions. Instead of trying to cram “same hero, same jacket, same alley, same pacing, same voice, camera dollies left, keep rain continuity” into one block of prose, decide which parts belong in images, which belong in a reference clip, which belong in audio, and which belong in the instruction text.

Third, use the cheaper lane for drafts. BytePlus publishes both Seedance 2.0 and Seedance 2.0 fast. If you are storyboarding, testing composition, or validating whether your reference set is coherent, fast is the sensible first pass. Save the standard model for the few shots that need better finishing or where fast repeatedly misses the target. That is often a cheaper migration strategy than trying to perfect every clip on the highest-fidelity setting from the start. (BytePlus)

Fourth, budget differently for video-conditioned jobs. If you are using video input, do not assume your costs will look like the no-video example rate. BytePlus’s own examples show higher price ranges once video is included in the input. That means teams doing continuation, edit, or transformation work should run a small pilot and inspect real usage before setting client-facing pricing or internal quotas. (BytePlus)

Fifth, preserve your successful outputs as a reference library. The better your input discipline becomes, the less you rely on “maybe the next reroll gets it right.” That is where Seedance can become more economical in practice than its raw per-second price suggests. A more controllable pipeline can lower the number of failed generations you have to pay for.
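The third and fifth points can be made concrete with a small cost model. The per-clip prices are BytePlus's 5-second 720p, no-video-input examples; the shot counts and attempt counts are invented purely for illustration and should be replaced with numbers from your own pilot.

```python
FAST_CENTS = 60      # Seedance 2.0 fast, 5-second 720p example
STANDARD_CENTS = 76  # Seedance 2.0, 5-second 720p example


def all_standard(shots: int, attempts_per_shot: int) -> int:
    """Total cents if every attempt runs on the standard model."""
    return shots * attempts_per_shot * STANDARD_CENTS


def draft_then_finish(shots: int, drafts_per_shot: int, finals_per_shot: int) -> int:
    """Total cents if drafts run on fast and only finishing passes run on standard."""
    return shots * (drafts_per_shot * FAST_CENTS + finals_per_shot * STANDARD_CENTS)


# Hypothetical project: 10 shots, 4 attempts per shot either way.
print(all_standard(10, 4) / 100)          # 30.4
print(draft_then_finish(10, 3, 1) / 100)  # 25.6
# Better references cutting attempts to 2 per shot saves more than the model switch.
print(draft_then_finish(10, 1, 1) / 100)  # 13.6
```

Under these made-up numbers, moving drafts to the fast model saves about 16 percent, while halving the number of attempts saves more than half. That is the sense in which input discipline can matter more than the per-second rate.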

A simple example of how the prompt logic changes

A lot of Sora-style prompts are written like richly descriptive film paragraphs. That can still work in Seedance, but it often leaves value on the table.

A typical Sora-style starting point might look like this:

A young woman in a yellow raincoat walks through a neon-lit alley at night. The camera slowly moves backward as she looks over her shoulder. Rain reflects pink and blue signs in the puddles. Natural footsteps, distant traffic, soft synth ambience.

That is concise and readable, but it places almost all control in natural language. A Seedance-oriented version should be more explicit about role assignment:

Goal: 6-second opening shot for a short cyberpunk sequence.

Reference image 1: keep the protagonist’s face, yellow raincoat, and wet hair styling.

Reference image 2: use the alley color palette, sign density, and puddle reflections.

Reference video 1: match the backward dolly pacing and slight handheld micro-shake.

Reference audio 1: use the ambient synth mood and distant traffic profile.

Instructions:

Single continuous shot.

Protagonist enters frame center, walking toward camera.

Camera tracks backward smoothly at medium speed.

Keep rain visible in foreground and puddle reflections sharp.

No crowd. No umbrellas. No text overlays.

Audio should prioritize footsteps, light traffic, and soft synth texture.

End with a brief shoulder glance toward screen right.

The point is not that Seedance requires robotic formatting. The point is that reference intent should be declared, not implied. That often reduces wasted generations because the model is not guessing which part of your prompt matters most.
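If you generate many briefs, it can help to keep the role assignments as data and render the text from them. The sketch below is a hypothetical helper, not a BytePlus or ByteDance API: the class names and the output layout are assumptions that mirror the example above, and the reference limits are the 9 images, 3 video clips, and 3 audio clips cited from the launch post.

```python
from dataclasses import dataclass, field

# Per-generation reference limits cited from ByteDance's launch post.
MAX_REFERENCES = {"image": 9, "video": 3, "audio": 3}


@dataclass
class Reference:
    kind: str  # "image", "video", or "audio"
    role: str  # what the model should take from this asset


@dataclass
class ShotBrief:
    goal: str
    references: list[Reference] = field(default_factory=list)
    instructions: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the brief as plain text, numbering references per kind."""
        lines = [f"Goal: {self.goal}"]
        counters: dict[str, int] = {}
        for ref in self.references:
            counters[ref.kind] = counters.get(ref.kind, 0) + 1
            if counters[ref.kind] > MAX_REFERENCES.get(ref.kind, 0):
                raise ValueError(f"too many {ref.kind} references")
            lines.append(f"Reference {ref.kind} {counters[ref.kind]}: {ref.role}")
        lines.append("Instructions:")
        lines.extend(self.instructions)
        return "\n".join(lines)


brief = ShotBrief(
    goal="6-second opening shot for a short cyberpunk sequence.",
    references=[
        Reference("image", "keep the protagonist's face, yellow raincoat, and wet hair styling."),
        Reference("image", "use the alley color palette, sign density, and puddle reflections."),
        Reference("video", "match the backward dolly pacing and slight handheld micro-shake."),
        Reference("audio", "use the ambient synth mood and distant traffic profile."),
    ],
    instructions=["Single continuous shot.", "Camera tracks backward smoothly at medium speed."],
)
print(brief.render())
```

Keeping the brief as structured data also makes it easy to swap one reference and regenerate, rather than rewriting a paragraph of prose.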

For multi-shot jobs, it helps to write each shot as a separate unit with a continuity rule:

Shot 1

5 seconds

Wide shot

Establish alley, protagonist approaching, backward camera move

Continuity rules

Same raincoat, same hair, same signage color palette, same wet pavement texture

Shot 2

4 seconds

Medium close-up

Shoulder glance, slow push-in, stronger footsteps, quieter traffic

That kind of structure is also easier to hand off to collaborators than a single paragraph prompt.
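A shot list like this can also be checked before anything is generated. The sketch below is one possible reading, under two stated assumptions: that the continuity rules are shared across the whole sequence, and that all shots are meant to fit inside a single generation capped at the 15-second output length cited for Seedance 2.0. The class and function names are hypothetical.

```python
from dataclasses import dataclass

MAX_OUTPUT_SECONDS = 15  # upper end of the output range cited in this article


@dataclass
class Shot:
    seconds: int
    framing: str
    action: str


def check_sequence(shots: list[Shot], continuity_rules: list[str]) -> int:
    """Return total duration, rejecting sequences that cannot fit one generation."""
    if not continuity_rules:
        raise ValueError("declare at least one continuity rule")
    total = sum(shot.seconds for shot in shots)
    if total > MAX_OUTPUT_SECONDS:
        raise ValueError(f"{total}s exceeds {MAX_OUTPUT_SECONDS}s; split the sequence")
    return total


shots = [
    Shot(5, "Wide shot", "Establish alley, protagonist approaching, backward camera move"),
    Shot(4, "Medium close-up", "Shoulder glance, slow push-in, stronger footsteps, quieter traffic"),
]
rules = ["Same raincoat", "Same hair", "Same signage color palette", "Same wet pavement texture"]
print(check_sequence(shots, rules))  # 9
```

If you generate each shot separately instead, the duration check would apply per shot rather than to the total.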

Common migration mistakes that make Seedance look worse than it is

One common mistake is trying to port Sora prompts literally. If your old workflow was built around “beautiful prompt prose,” you may conclude that Seedance is inconsistent when the real issue is that you are not using its reference model. Seedance tends to reward asset discipline more than adjective stacking.

Another mistake is treating the fast model as final output and then deciding the platform is mediocre. Fast should usually be your planning layer, not your prestige export layer. If the fast model solves composition, motion logic, and continuity for your draft, it has already done useful work.

A third mistake is forgetting the cost effect of video input. If you add video references everywhere because it feels powerful, you may accidentally move the project into a more expensive operating pattern than necessary. Sometimes an image plus better instruction text is enough.

A fourth mistake is rebuilding the old workflow instead of improving it. If Sora trained you to over-rely on repeated rerolls, the real gain in moving to Seedance may come from generating fewer but more directed attempts, not from chasing a lower cost-per-second number.

Where Sora was still the easier buy

Sora’s value proposition, while it lasted, was easy to explain to a broad audience. You could point people to a familiar OpenAI-linked surface, a recognizable monthly plan, and a short-clip product that behaved more like a consumer app than a production system. For non-technical users, that simplicity was real. (OpenAI Help Center)

That is why some teams will reasonably say Sora felt cheaper even if the official API math says otherwise. Subscription software often feels cheaper when it hides complexity, especially early in adoption. A creator paying $20 a month and casually producing clips inside an existing account may not care that an API somewhere has a different per-second cost model. Convenience is part of perceived price.

But that was always a fragile advantage. Once the product surface is scheduled to disappear, simplicity stops being a strategic benefit and starts looking like temporary comfort.

Where Seedance is the more realistic replacement

Seedance becomes the obvious replacement when three conditions are true.

The first is that you need video generation to remain part of your workflow after Sora’s published shutdown dates. That alone disqualifies Sora as the place to build something new. (OpenAI Help Center)

The second is that your work benefits from reference-heavy control. If you are doing product explainers, ad concepts, short-form narrative sequences, performance-driven shots, or character continuity work, Seedance’s multimodal structure makes more sense than treating video creation as a pure text-output problem. (ByteDance Seed)

The third is that you are willing to manage production logic rather than just prompts. Seedance is not the path of least cognitive effort. It is the path of more explicit control.

That control also changes how teams think about stack design. Many migration problems are not really “which video model is best” problems. They are “why are writing, research, image generation, comparison, and video generation spread across six dashboards” problems. GlobalGPT presents itself as an all-in-one AI platform for writing and creation, which is relevant if your Sora replacement process is really a broader multi-model workflow problem rather than a single-renderer decision. Its homepage positions the product around bringing multiple advanced AI models into one workspace. (GlobalGPT)

That kind of workspace can be useful when the actual job looks like this: draft a script, compare two phrasings, summarize source material, create a still reference image, and then send the result into a video model. In that situation, the operational savings from a unified environment can matter as much as the price difference between one vendor’s 720p API and another’s. This is especially true for small teams that do not want separate billing, separate exports, and separate prompt histories for every stage of the job. (GlobalGPT)

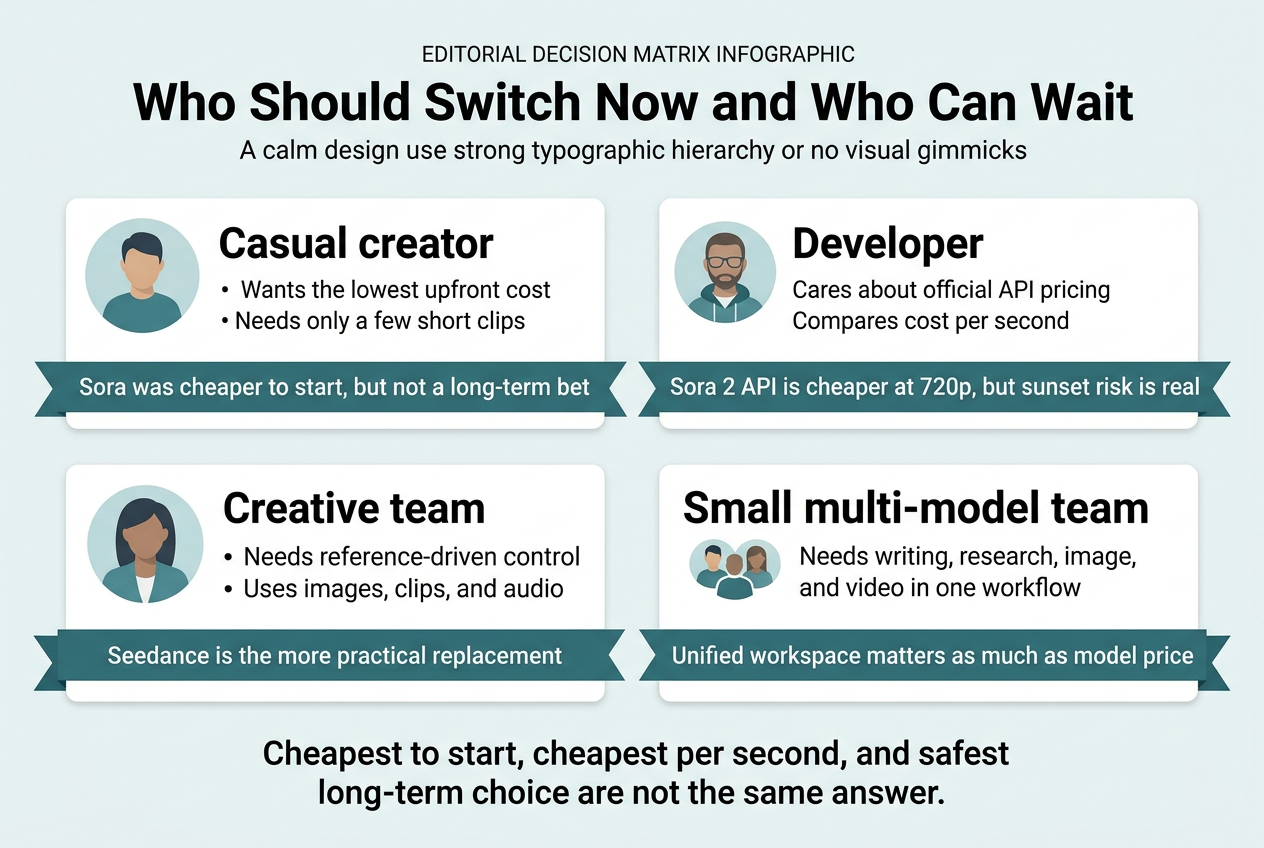

Who should switch now, and who can wait

A developer who only needs 720p API generation for a short-lived prototype can make a rational case for staying on Sora temporarily because the published per-second API rate is lower. That is a narrow but defensible use case, especially if the prototype ends well before the September shutdown. (OpenAI Developers)

A creator or teacher who only wanted the lowest-friction way to generate a few clips may still find Sora’s subscription model simpler, but only if they already have access and do not mind the near-term product sunset. For new commitments, that simplicity is increasingly hard to justify. (OpenAI Help Center)

A marketer, small production team, or founder building repeatable creative workflows should be looking at Seedance now, not because it is always cheaper, but because it is structurally better suited to reference-driven work and is not the product line being dismantled. (ByteDance Seed)

A student or researcher who needs one platform for writing, summarizing, comparing, and visual creation should also think beyond a pure model-versus-model benchmark. The bottleneck is often workflow fragmentation, not raw model quality.

The honest conclusion

There is no factual basis for saying “Seedance is simply cheaper than Sora in 2026.” That is too broad to be true.

If the question is lowest official upfront cost, Sora still wins through ChatGPT Plus at $20 per month, while official Seedance 2.0 access through BytePlus resource packs starts closer to $30. (OpenAI Help Center)

If the question is current official 720p API cost, Sora 2 also wins on paper at $0.10 per second, compared with BytePlus’s official 5-second examples that put Seedance 2.0 fast around $0.12 per second and standard Seedance 2.0 around $0.152 per second. (OpenAI Developers)

If the question is what should replace Sora for the rest of 2026, Seedance is the more realistic answer because Sora’s shutdown dates are already published and OpenAI has not named a direct successor in its deprecation documentation. (OpenAI Help Center)

So the mature answer is this: Sora was cheaper to start with, Sora API is cheaper at 720p, but Seedance is the safer platform to move toward if you need an actual replacement instead of a temporary stopgap. That is not a slogan. It is what the published product and pricing documents support as of April 16, 2026. (OpenAI Help Center)

Further reading and reference links

For OpenAI’s current Sora status and pricing, the most useful starting points are OpenAI’s Sora discontinuation notice, Sora Billing FAQ, Sora 2 API model page, Sora 2 Pro API model page, and the OpenAI API deprecations page. (OpenAI Help Center)

For Seedance, the highest-value primary sources are ByteDance Seed’s official Seedance 2.0 launch post, the Seedance 2.0 model page, BytePlus ModelArk’s pricing page, Seedance 2.0 resource-pack documentation, and the international availability page. (ByteDance Seed)

For recent market context, TechCrunch’s report on Dreamina Seedance 2.0 coming to CapCut and Reuters’ report on OpenAI dropping Sora are both useful reads. (TechCrunch)

For related reading inside glbgpt.com, the most relevant pages are the GlobalGPT homepage and How to Use Seedance 2.0 as a Director-Level AI for Multi-Shot Videos. (GlobalGPT)