DeepSeek V4 Live on GlobalGPT: Access the 1.6T Pro Model Now!

DeepSeek V4 is officially here, shattering industry expectations with a 1.6 trillion parameter architecture and a massive 1 million token context window. However, many professional users in 2026 report significant hurdles with the official API, particularly intermittent timeouts during peak hours and high inference latency on complex reasoning tasks. While the V4-Pro model is a powerhouse, these technical friction points can stall professional development cycles and disrupt real-time AI workflows.

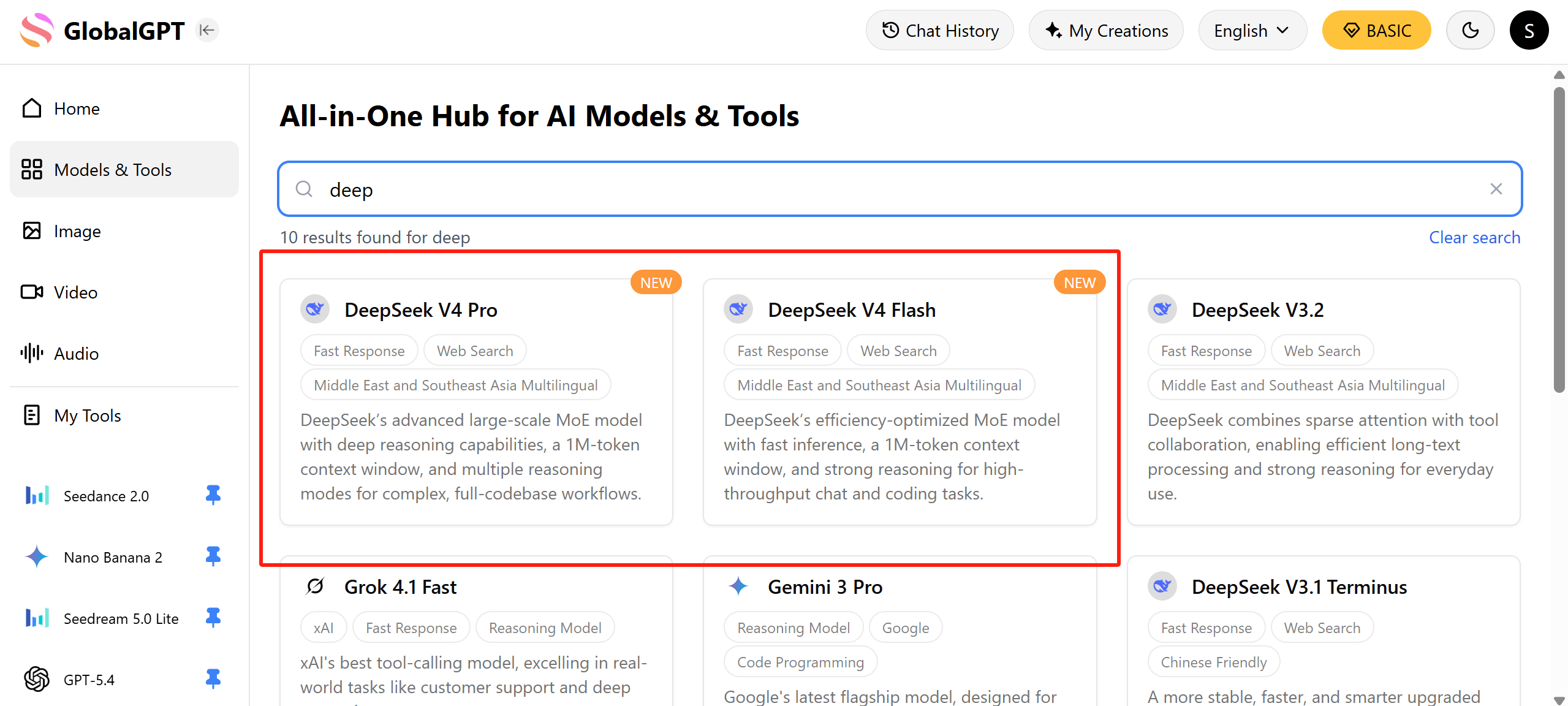

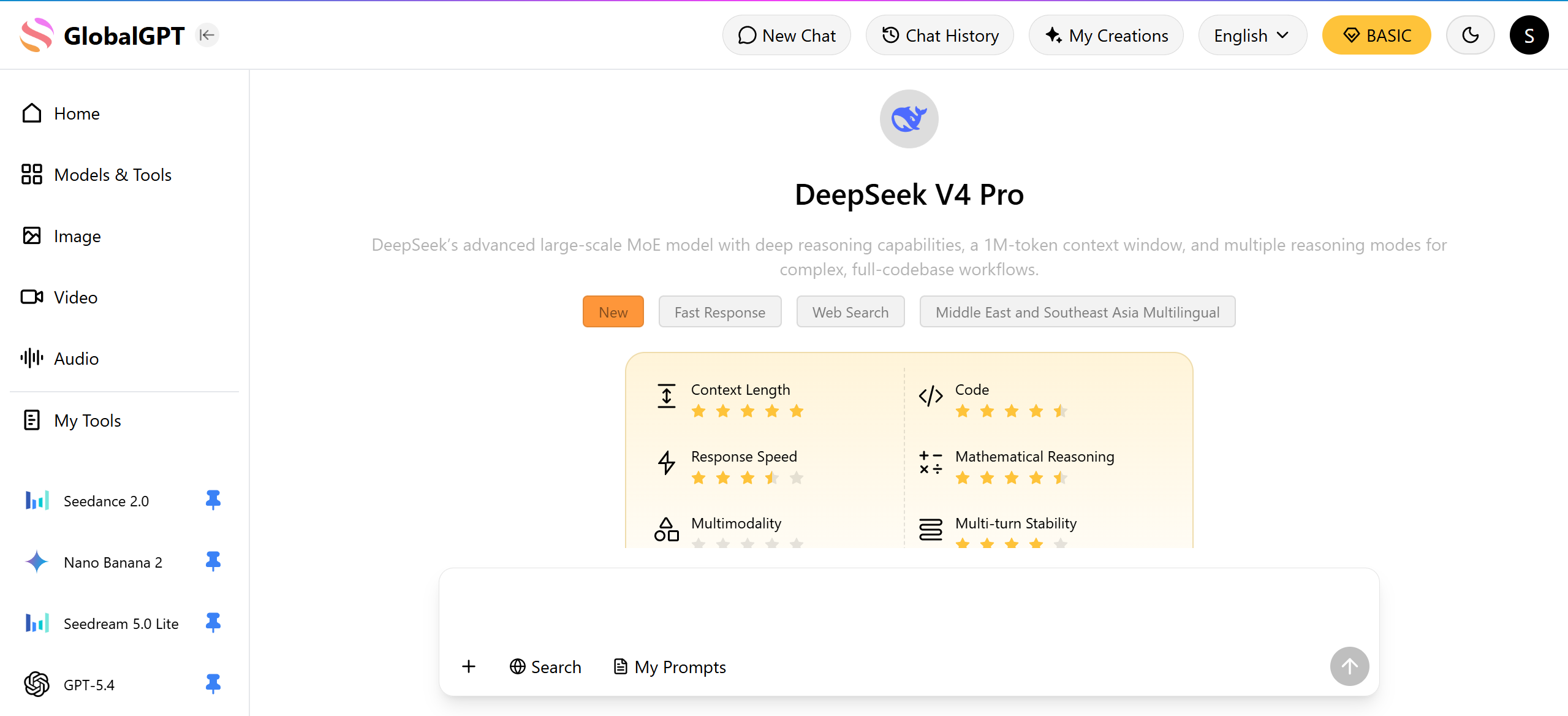

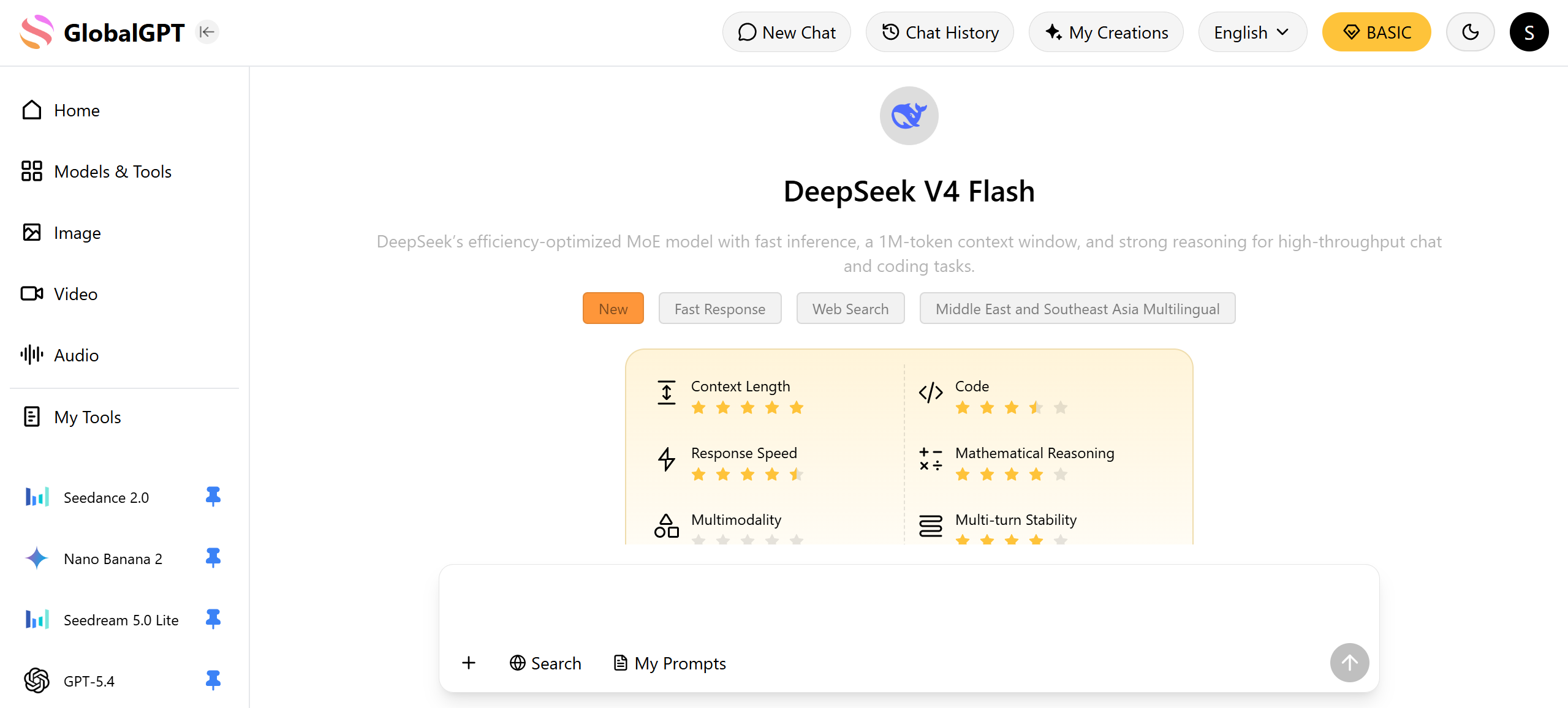

To solve these frustrations, GlobalGPT has fully integrated the DeepSeek V4 family into its streamlined dashboard, offering a stable, high-speed environment for immediate deployment. You can now access the flagship DeepSeek V4-Pro for deep reasoning, the ultra-fast V4-Flash for real-time logic, and the V4-Vision native multimodal model without worrying about regional IP blocks or complex API key management. For power users focused on large language models, the Basic Plan at only $5.80 provides an unbeatable entry point, while creative pros can unlock the full potential of V4-Pro through our $10.80 Pro Plan, which includes advanced video and image capabilities.

Beyond simple chat, GlobalGPT empowers you to cover the complete project workflow by bridging world-class reasoning with visual production. Once DeepSeek V4-Pro refines your logic or code, you can instantly transition to Sora 2 Flash, Veo 3.1, or Kling for video generation, or use Midjourney and Nano Banana 2 for photorealistic imagery, all within the same interface. This ecosystem eliminates the need for five different $20 subscriptions, allowing you to move from initial research to final 4K video production in one seamless, cost-effective experience.

What is DeepSeek V4? The New Frontier of Open-Source AI

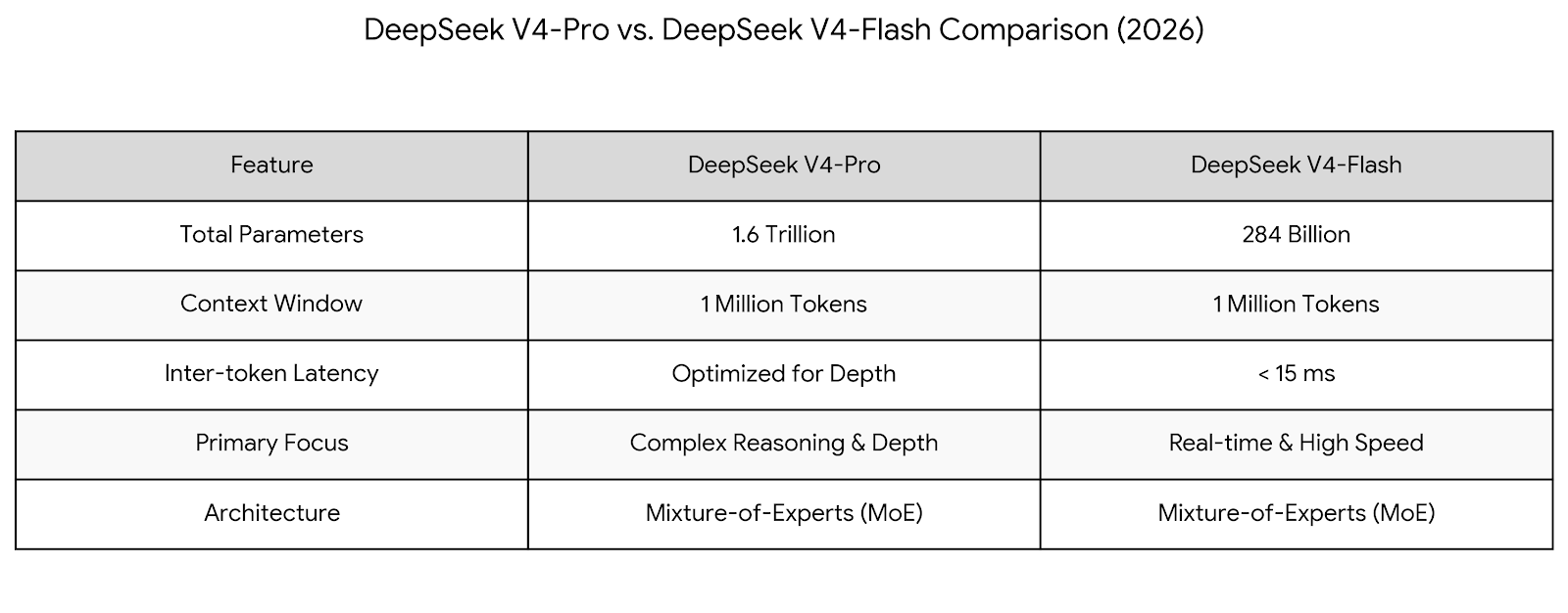

DeepSeek V4 represents a massive generational leap, pushing the boundaries of what open-source AI can achieve. Released in late April 2026, the model architecture is built on a sparse Mixture-of-Experts (MoE) system scaled to a staggering 1.6 trillion total parameters for the Pro version.
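To make the "sparse" part of sparse MoE concrete, here is a minimal sketch of top-k expert routing in plain Python. It illustrates the general technique only; the toy experts, the router scores, and the choice of `k=2` are all assumptions for illustration and are not taken from DeepSeek's actual implementation.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([gate_scores[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, gate_scores, k=2):
    """Combine outputs of the selected experts only; the others are never called."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Four toy "experts"; a real model would use learned feed-forward networks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
gate_scores = [0.1, 2.0, -1.0, 2.0]  # hypothetical router logits for one token

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)
```

Only two of the four experts run for this token, which is why total parameter count can grow much faster than the compute spent per token.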

One of the most disruptive features is the 1 million token context window, now officially supported across both Flash and Pro tiers. This allows developers and researchers to upload entire code repositories or multi-year document archives directly into the prompt without needing complex Retrieval-Augmented Generation (RAG) layers. Furthermore, V4 is natively multimodal, meaning it was trained from the ground up to understand text, vision, and audio simultaneously, rather than bolting vision modules onto a text-only base.

Why You Should Use DeepSeek V4 on GlobalGPT Today

While the raw power of DeepSeek V4 is undeniable, accessing it through official channels often presents significant hurdles for international users. GlobalGPT removes these barriers by providing a stable, unified dashboard.

Bypass Regional & Payment Restrictions: Many users struggle with the DeepSeek API's regional IP blocks or the requirement for specific Chinese payment methods. GlobalGPT works globally, accepting international cards without the need for a VPN.

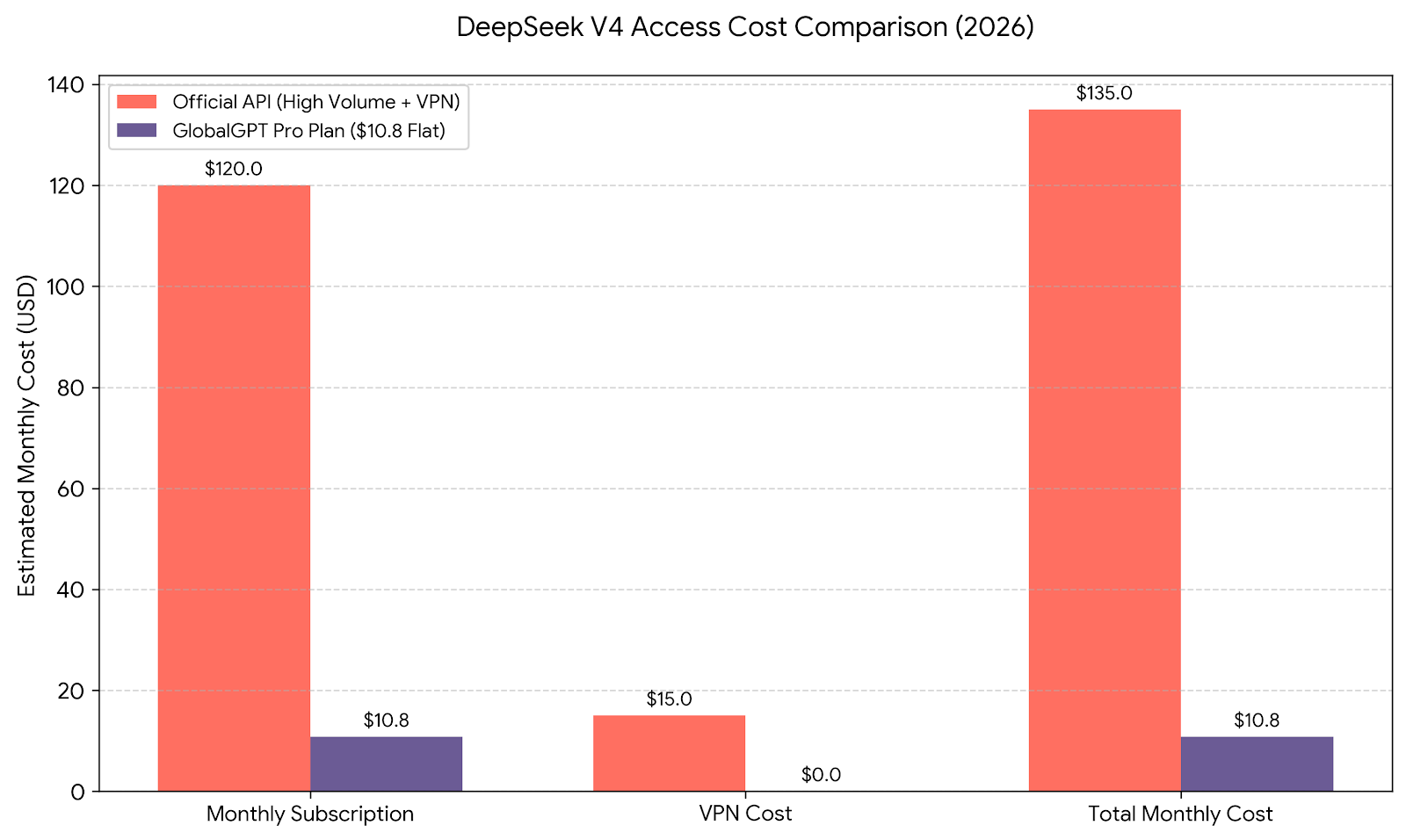

Predictable Pricing vs. "Credit Anxiety": Instead of worrying about unpredictable token billing that can spike during long-context tasks, GlobalGPT offers a clear, flat-rate subscription model.

Workflow Synergy: On GlobalGPT, DeepSeek V4 serves as the "brain" of your project. You can use V4-Pro to draft a complex video script and then immediately send that output to Sora 2 or Veo 3.1 for production—all within one tab.

DeepSeek V4 Performance Benchmarks (April 2026)

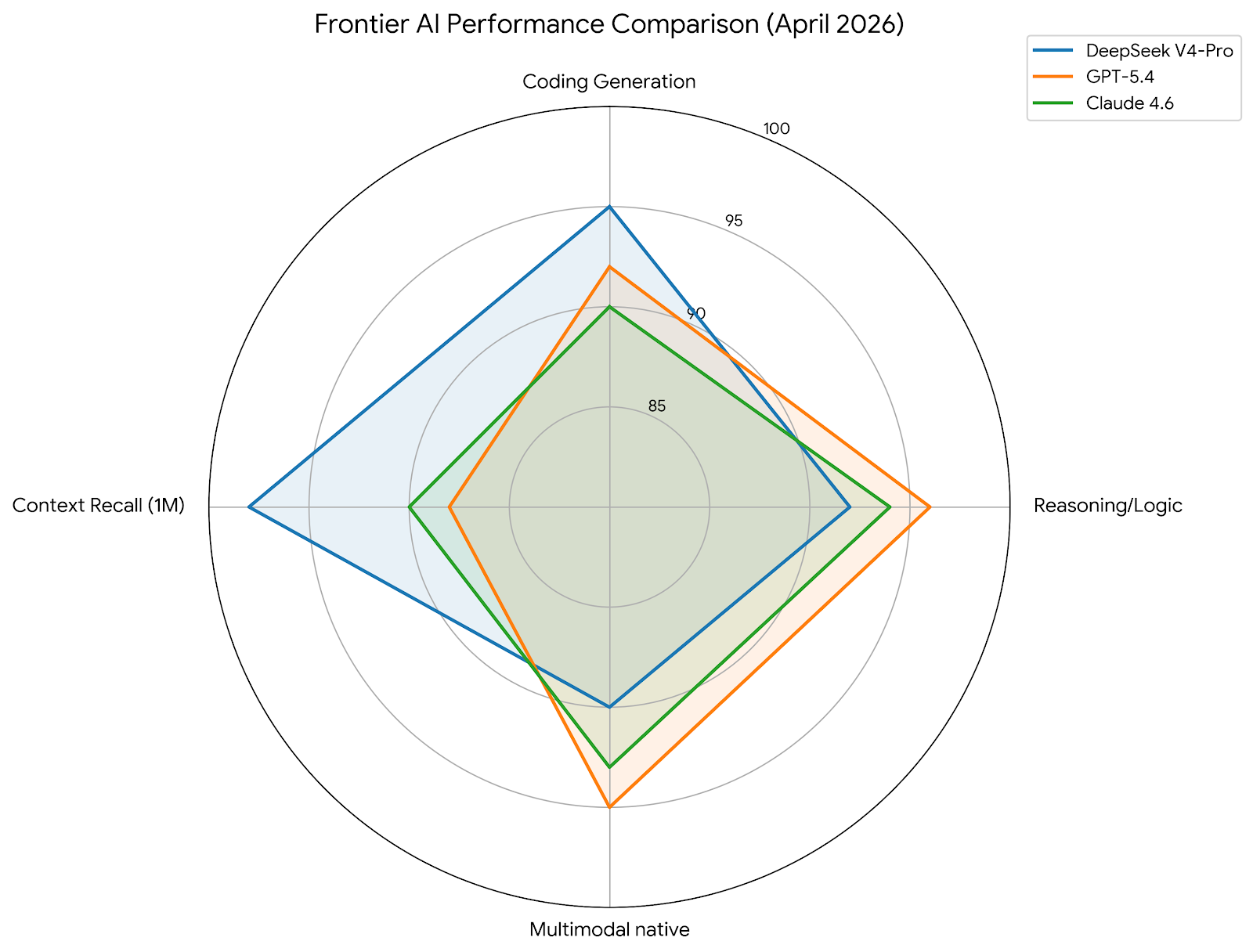

DeepSeek V4-Pro has quickly ascended the leaderboards, challenging the dominance of Western labs. Early evaluations place V4-Pro at approximately 88.5% on the MMLU benchmark, a significant improvement over V3’s 85.5%.

In coding-specific tasks, the results are even more impressive. V4-Pro’s specialized MoE pathways allow it to achieve near-perfect scores on HumanEval, often outperforming Claude 4.6 and GPT-5.4 in generating optimized, bug-free Python and Rust code. For real-time applications, V4-Flash maintains an inter-token latency of under 15 milliseconds, making it ideal for live chat agents and real-time function calling.
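As a quick back-of-the-envelope check, the inter-token latency figure can be converted into streaming throughput. The sketch below assumes the 15 ms upper bound quoted above and a hypothetical 300-token reply; it ignores time to first token and network overhead.

```python
def tokens_per_second(inter_token_latency_ms):
    """Streaming throughput implied by a fixed gap between tokens."""
    return 1000.0 / inter_token_latency_ms

def streaming_time_seconds(num_tokens, inter_token_latency_ms):
    """Time to stream num_tokens, excluding time to first token."""
    return num_tokens * inter_token_latency_ms / 1000.0

rate = tokens_per_second(15)                   # at the 15 ms upper bound
reply_time = streaming_time_seconds(300, 15)   # hypothetical 300-token reply
print(f"{rate:.1f} tokens/s, {reply_time:.1f} s for 300 tokens")
```

At 15 ms per token this works out to roughly 67 tokens per second, so a 300-token answer streams in about 4.5 seconds.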

Technical Deep-Dive: Engram Memory & Huawei Optimization

The efficiency of DeepSeek V4 is largely attributed to its "algorithm-hardware co-design".

Engram Conditional Memory: This technology separates static knowledge (like historical facts) into a lookup table while reserving the MoE neural backbone for dynamic reasoning. This reduces the computational load by "not calculating what the model can simply look up".

Huawei Ascend 950PR Integration: V4 is the first flagship model optimized specifically for Chinese domestic silicon. By leveraging Huawei’s super-nodes, DeepSeek achieves world-leading cost efficiency that allows GlobalGPT to pass those savings on to you.
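The "look up instead of calculate" idea behind Engram Conditional Memory can be illustrated with a toy analogy. The sketch below is not DeepSeek's implementation: the class name, the dictionary-based table, and the stand-in reasoner are all hypothetical, chosen only to show a lookup path that skips the expensive compute path when a static answer exists.

```python
class LookupFirstModel:
    """Toy analogy: consult a static table before invoking expensive compute."""

    def __init__(self, static_table, compute_fn):
        self.static_table = static_table  # stands in for the static-knowledge table
        self.compute_fn = compute_fn      # stands in for the MoE reasoning backbone
        self.compute_calls = 0

    def answer(self, query):
        key = query.strip().lower()
        if key in self.static_table:
            # Static knowledge: no computation needed.
            return self.static_table[key]
        # Dynamic reasoning: fall through to the expensive path.
        self.compute_calls += 1
        return self.compute_fn(query)

def toy_reasoner(query):
    """Stand-in for dynamic reasoning: sums the integers found in the query."""
    return str(sum(int(tok) for tok in query.split() if tok.isdigit()))

model = LookupFirstModel({"capital of france": "Paris"}, toy_reasoner)
fact = model.answer("Capital of France")   # served from the table
computed = model.answer("add 2 and 40")    # requires the compute path
```

After both calls, `model.compute_calls` is 1: the factual query never touched the reasoning path.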

People Also Ask (PAA)

Can I use DeepSeek V4 without a specialized Chinese GPU?

Yes. While DeepSeek V4 is specifically optimized for Huawei Ascend 950PR chips, it remains fully compatible with Western hardware like NVIDIA and AMD. However, the most efficient way to access V4-Pro, without worrying about hardware optimization or local setup, is through GlobalGPT. We handle the backend infrastructure using optimized "kernels" adapted for both NVIDIA and Huawei chips to ensure you get the highest throughput regardless of your local machine's specs.

How does DeepSeek V4’s 1M context handle large codebases?

The 1 million token context window is a game-changer for software engineering. Powered by Engram Conditional Memory and DeepSeek Sparse Attention, the model can maintain near-perfect recall across an entire repository. Instead of relying on RAG (which can miss nuances), you can feed thousands of lines of code directly into the model to identify bugs, refactor architecture, or generate documentation with complete global awareness of the project.
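Before pasting a whole repository into a prompt, it helps to estimate whether it fits in the window at all. The sketch below is a rough estimate under stated assumptions: the 4-characters-per-token ratio is a common heuristic rather than the real DeepSeek tokenizer, and the file suffixes and output reserve are hypothetical defaults you would adjust.

```python
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 1_000_000  # context size quoted in this article
CHARS_PER_TOKEN = 4                # rough heuristic, not the real tokenizer

def estimate_tokens(text, chars_per_token=CHARS_PER_TOKEN):
    """Approximate token count via ceiling division of character count."""
    return -(-len(text) // chars_per_token)

def estimate_repo_tokens(root, suffixes=(".py", ".rs", ".md")):
    """Sum estimated tokens over source files under root (suffixes are an assumption)."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="ignore")
            total += estimate_tokens(text)
    return total

def fits_in_context(token_count, reserve_for_output=8_000,
                    window=CONTEXT_WINDOW_TOKENS):
    """True if the prompt plus a reserve for the model's reply fits in the window."""
    return token_count + reserve_for_output <= window
```

For example, `fits_in_context(estimate_repo_tokens("."))` gives a quick yes or no for the current directory. Treat the result as a ballpark figure, since real token counts vary by language and tokenizer.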

Is GlobalGPT the most affordable way to access V4-Pro in the US/EU?

Absolutely. Using the official DeepSeek API from the US or EU often involves VPN overhead and complex international payment verification. Furthermore, official token-based pricing can become expensive for the heavy usage that a 1.6T model demands. At just $10.80 per month for the Pro Plan, GlobalGPT provides a flat-rate, high-performance gateway that undercuts official subscription costs and eliminates the "credit anxiety" common with other providers.
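Whether a flat rate beats metered billing depends on how many tokens you use each month. The sketch below computes the break-even point; the $10.80 figure is the Pro Plan price quoted above, while the per-million-token rate is a placeholder and not an actual DeepSeek price, so substitute the current published rate before drawing conclusions.

```python
FLAT_MONTHLY_USD = 10.80  # Pro Plan price quoted in this article

def metered_cost_usd(tokens, usd_per_million_tokens):
    """Cost of a given token volume under per-token billing."""
    return tokens / 1_000_000 * usd_per_million_tokens

def break_even_tokens(flat_usd, usd_per_million_tokens):
    """Monthly token volume at which metered cost equals the flat rate."""
    return flat_usd / usd_per_million_tokens * 1_000_000

HYPOTHETICAL_RATE = 2.00  # placeholder USD per million tokens, NOT a real price

threshold = break_even_tokens(FLAT_MONTHLY_USD, HYPOTHETICAL_RATE)
print(f"Break-even at about {threshold:,.0f} tokens per month")
```

Below the break-even volume metered billing is cheaper; above it, the flat rate wins. A handful of full 1M-token prompts can cross that line quickly.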

Conclusion: Elevate Your Workflow with DeepSeek V4 on GlobalGPT

GlobalGPT is the most affordable and powerful way to use DeepSeek V4-Pro alongside 100+ major AI models through a single, easy-to-use account. By integrating this frontier-class 1.6T model into your daily routine, you aren't just getting a better chatbot—you're gaining an end-to-end production partner that bridges the gap between deep logical reasoning and high-fidelity visual content. Whether you are debugging massive codebases or generating 4K video, GlobalGPT ensures you have the world’s best AI at your fingertips for one predictable price.